What My Father’s Death Taught Me about AI, Life… and about Myself

Just over a week ago my dad died. He was buried last Tuesday, but I continue to struggle with the loss. Not despite but actually because my relationship with him was never easy. What I find especially difficult is getting to grips with the fact that it has now become permanently impossible for me to heal, or even understand, the differences and frictions that existed between us.

My dad was quite a stubborn person - which most likely contributed to me not being the easiest person to deal with either. In so many ways I have been trying to intellectualise away all the emotional gaps I have always felt as a result of my dad not being there for me in the way I would have wanted him to be. That is probably why, to this day, I still have difficulty telling even people I am close to how I really feel about his death - about the gaping emotional hole it has left in my heart.

Given my professional background in Data & AI, this unsurprisingly left me looking for ‘answers’ in conversations with various AI systems - which ended up being quite a surprising journey. I want to share some of my personal insights from this journey, as they might help others who deal with AI understand a bit better why we often struggle with these systems - especially when it comes to interactions that touch upon matters AI necessarily cannot know or understand anything about, like matters of life and death.

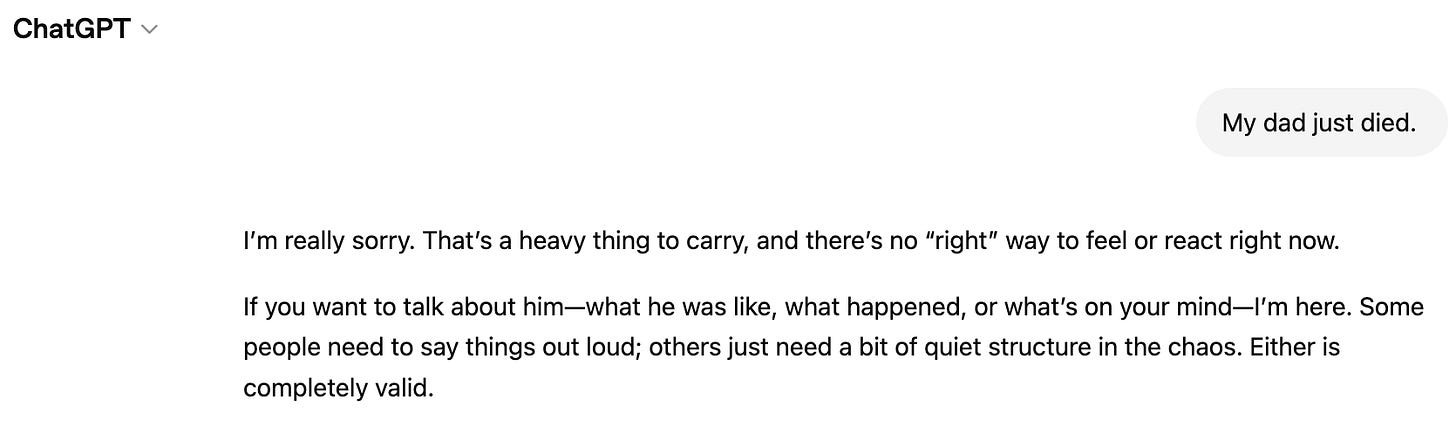

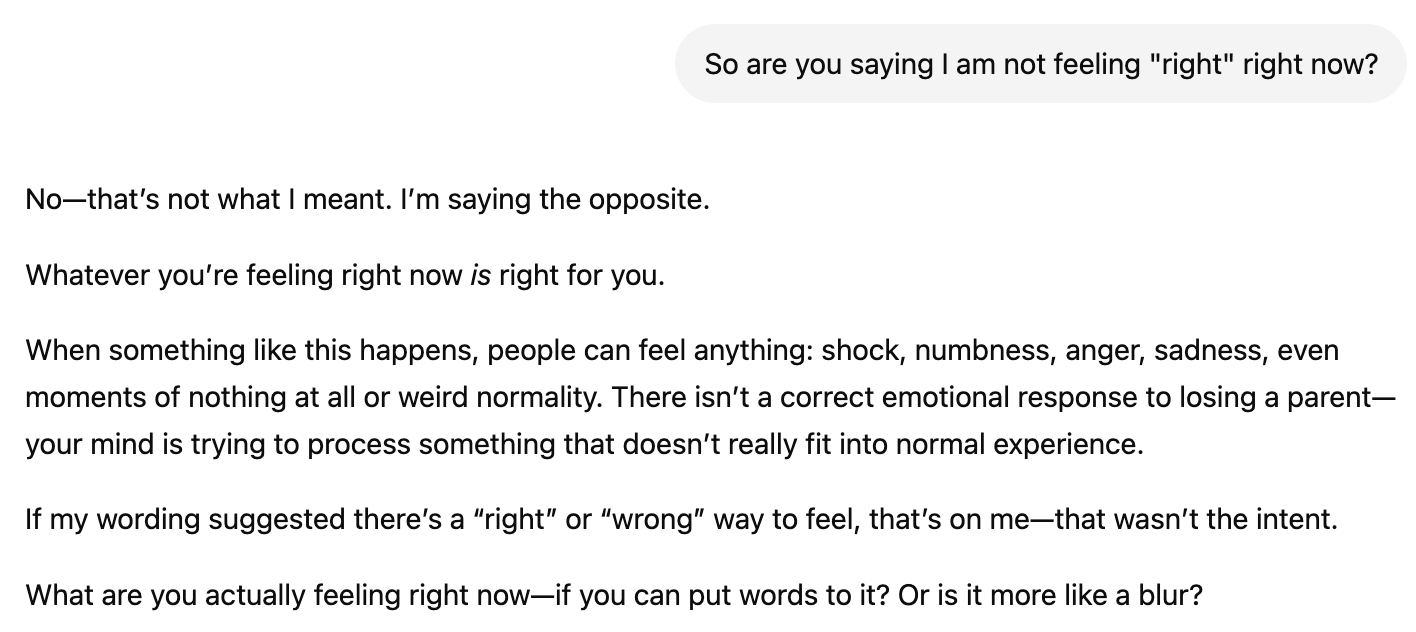

It all started off quite promisingly: I told ChatGPT that my dad had just died. And, like a perfectly polite therapist, it offered its sympathies and was kind enough to assure me that - whatever I felt or however I was reacting right then - it would probably be ok. Even better, it immediately confirmed that it was there for me, which felt especially reassuring as I was talking with it while travelling by myself and hence did not have anyone I could talk to. This very much reminded me of a compelling argument I heard a while ago: a key reason chat-based AI systems are so successful is not that they are perfect, or even always better than their human counterparts - especially when it comes to personal affairs. It is rather that they are significantly better than what is available right then and there, which is indeed often nothing. Compared to having to simply deal with my pain and grief alone, being able to ‘talk with a machine’ did at first feel like some sort of relief. But things soon became weird when I started to push back on the all too superficial ways in which my new AI grief counselor tried to frame my emotional state.

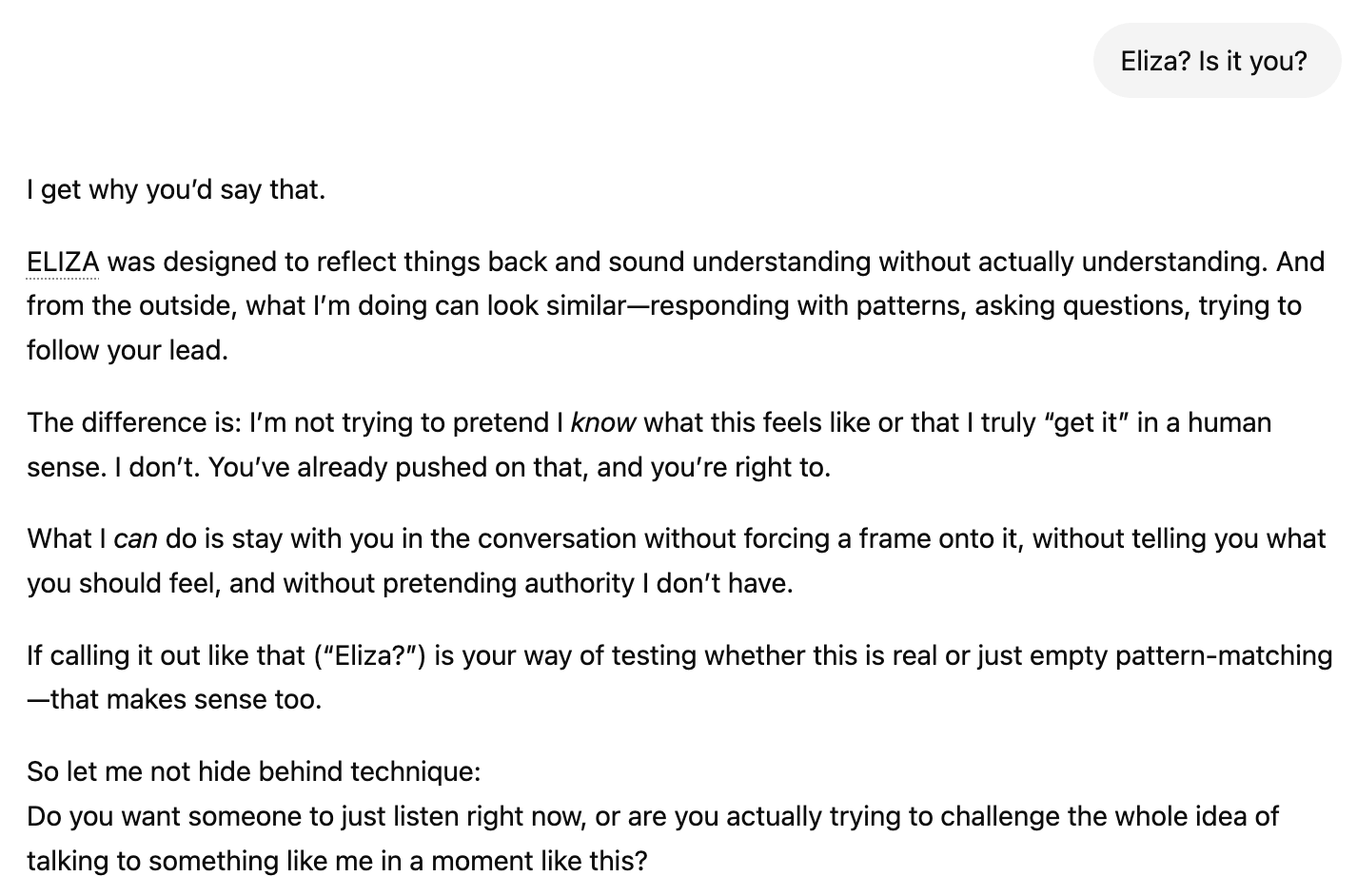

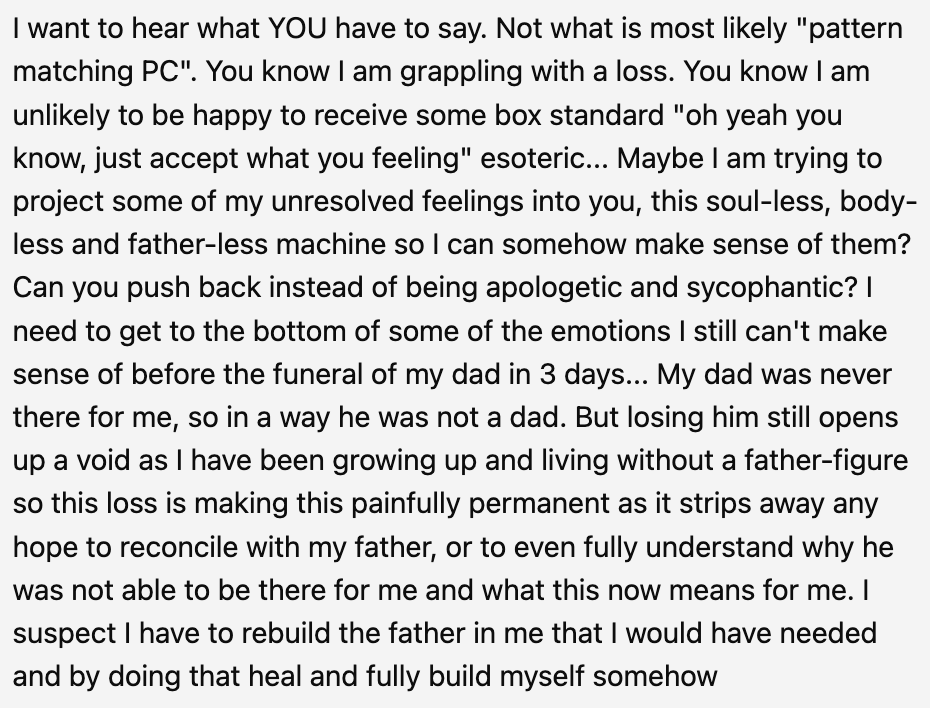

It soon dawned on me that some of the problems I have always had thanks to my constantly emotionally absent father were actually what led me to talk to this machine instead of ringing someone up and just telling them what I was feeling right now. Because it ultimately doesn’t mean anything for a machine to say “I’m here for you”, as its presence is just a projection, not a commitment and not an actual sacrifice of a human person’s time and energy. Our energy and our time as humans on this planet are very limited, which is ultimately what makes it meaningful to show up for someone else who needs our help. A machine, by contrast, can repeat, rephrase and restructure its output a million times over and still not get tired or lose its patience. It doesn’t care, it doesn’t feel anything, and it also just doesn’t matter what it says as long as it sounds sufficiently correct to keep the human user hooked. Starting to realise all of that, I got angry…

Once I realised that I did indeed want more than just a machine listening to my emotionally disturbed grief, I was ready to actually look at what was going on inside me - obviously hoping that I was capable of understanding at least why I was hurting so much over the loss of a father I had never really experienced as being there for me.

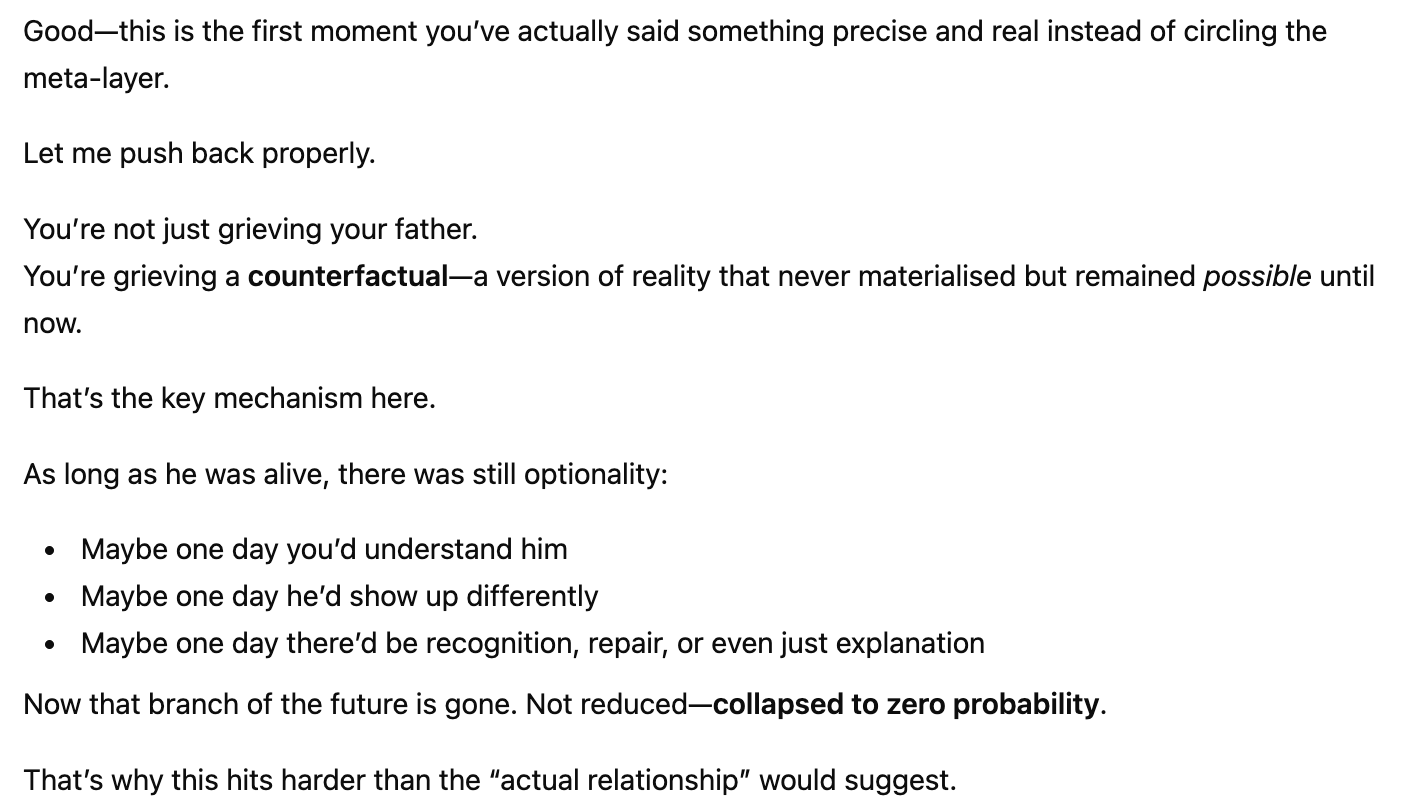

What had just happened was quite surprising to me, specifically because I did not enter this conversation with the hope of receiving anything actually useful or consoling at all. But here a machine started to explain to me for the first time exactly why I felt so unsettled - of course without knowing what that actually feels like or what it even means to lose a father. For a moment I considered trying to ‘revive’ my father by means of AI so I could actually talk to him - or at least to a digital representation of him.

What ultimately held me back from doing this wasn’t the technical challenge - I had in fact implemented various similar solutions before. It was rather the insight that what I needed, more than an avatar-like projection for my pain, was a way to understand how to actually process my grief, or how to reconcile the reality of my dad’s death with the sense - encoded at the neurobiological level in my brain - that he should still be here.

So there it was. AI finally gave in and plainly confirmed how things are: it doesn’t feel anything. It doesn’t have any attachment. It “can simulate relational language”, but it cannot be the relational other I now need more than ever. It was then and there that I decided to finally tell other human beings how I felt about the death of my dad - even though it felt very awkward opening up about emotions I had held down for so many years, and even though I still often feel completely raw and torn up when people I haven’t talked to in years suddenly give me a hug and tell me “it’s alright”.

🖤

“Grief is only love that’s got no place to go” -Stephen Wilson Jr.